Difference between revisions of "AR Drone 2/Mapping"

Koudshoorn (talk | contribs) |

IHaveADrone (talk | contribs) m (spelling etc.) |

||

| (15 intermediate revisions by one other user not shown) | |||

| Line 1: | Line 1: | ||

<categorytree style="float:right; clear:right; margin-left:1ex; border: 1px solid gray; padding: 0.7ex;" mode=pages>AR Drone 2</categorytree> | <categorytree style="float:right; clear:right; margin-left:1ex; border: 1px solid gray; padding: 0.7ex;" mode=pages>AR Drone 2</categorytree> | ||

One of the functions that the AR.Drone2 should have is to be able to map a certain area (starting off with a room). In order to do this the AR.Drone2 needs to be able to: measure distance to objects, keep track of it's own position, | <span style="color:red">'''Mapping has not been integrated with paparazzi due to lack of time. Yet, all components have been tested and are working properly.'''</span> | ||

One of the functions that the AR.Drone2 should have is to be able to map a certain area (starting off with a room). In order to do this the AR.Drone2 needs to be able to: measure distance to objects, keep track of it's own position and heading, store a map efficiently and avoid crashing into objects. The first two things are needed to create a map from measurements during flight, the third is to use a map and still be able to go through all the control loops of paparazzi, the fourth is to maintain flight. It would also be useful to make an algorithm for the AR.Drone2 to explore the area and store the map in the most efficient way. | |||

== Storing the map== | == Storing the map== | ||

For storing the map, we | For storing the map, at first we believed [http://octomap.sf.net/ Octomap] ([http://ais.informatik.uni-freiburg.de/publications/papers/wurm10octomap.pdf paper]) to be a good solution. It's a datastructure using an [http://en.wikipedia.org/wiki/Octree OcTree] to store a 3D map that is updateable, flexible and compact. However, since we want to fly in a 2D manner, it's not very useful. You'd have to retrieve a 2D slice of the map at a certain hight (for which there is no build-in function) every iteration which would take a lot of unnecessary time. | ||

Since we liked the flexibility of the Octomap, we created our own 2D version of it: a quadmap so to speak. Instead of an octree, it uses a quadtree to store information about the map. This way we represent the map as a square instead of a cube, like the Octomap does. The rest is pretty much the same: we keep a record of all the occupied, free and unknown cells and we can update the map by casting rays from a startingpoint towards an endpoint (with endpoint being occupied or not). | |||

== Exploring the area== | == Exploring the area== | ||

Since the AR.Drone2 will only have point measurement sensors to measure distance to objects it is | Since the AR.Drone2 will only have point measurement sensors to measure distance to objects, it is pretty much impossible to create a 3D map. Flying on the same hight all the time, will make a 2D map sufficient for the job. We're using [http://ieeexplore.ieee.org/stamp/stamp.jsp?tp=&arnumber=613851 Frontier-Based Exploration] for this purpose. With this approach you start with a map filled with unknown cells. Then, by scanning the surroundings of the robot, you fill in the unknown cells: occupied or unoccupied. Next, you search all the frontiers (the border between unoccupied and unknown cells) and pick the frontier with the optimum of being the largest and with the least travel distance. Subsequently you take that frontier as your next destination and upon arrival you scan again. There are also variations for 3D ([http://www.informatik.uni-freiburg.de/~ki/papers/dornhege_etal_ssrr11.pdf paper]). But this is based on a wide range sensor (kinect for example) and therefore not suited for our AR.Drone2. | ||

As explained, Frontier-Based Exploration consists out of a few steps that will be repeated until the whole area is explored: | As explained, Frontier-Based Exploration consists out of a few steps that will be repeated until the whole area is explored: | ||

* Scan | * Scan | ||

* Search for frontiers | * Search for frontiers | ||

* Pick best frontier | * Pick best frontier | ||

* Travel | * Travel | ||

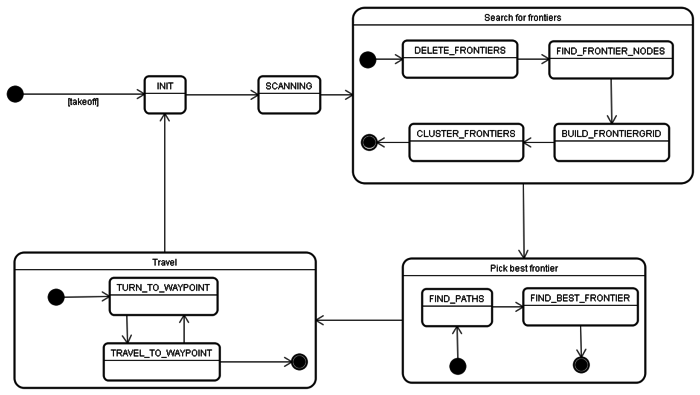

=== | We can use these steps to create a statemachine. Since paparazzi needs to go through its control loops to keep the drone in the air (and because it's a single threaded system), we need to execute the mapping algorithm in smaller steps and thus keep track of what state we are. | ||

[[Image:statediagram_mapping.png|thumb|center|700px|Statediagram of Frontier-Based Exploration with the AR.Drone2]] | |||

As you can see, some of the steps of Frontier-Based Exploration have been split up into smaller substates. This way we can see more easily what the program is supposed to do while keeping the operation time per iteration to a minimum. Note however that, while true for some, not all states shift to the next after one iteration. In some cases, FIND_PATHS for example, it will take a few iterations before it will shift. | |||

=== Init=== | |||

Inside the mapping algorithm, we not only keep track of the state, we also keep track of other things like indexes, iterator positions etc. In the init state, all these fields are set to their respective starting values, after which the algorithm enters the next state. | |||

=== Scanning=== | |||

Some robots can scan entire areas while moving with wide range sensors. Our Ar.Drone2 is limited to point measurement sensors and therefore has to have an explicit step "Scan". Seeing as our drone will have 4 horizontally placed sensors in a + sign, a 360 degrees scan can be made by making a 45 degrees turn. By keeping track of our rotation and position, with the use of internal sensors, we can deduct where the scanned points are in our selfmade map. | Some robots can scan entire areas while moving with wide range sensors. Our Ar.Drone2 is limited to point measurement sensors and therefore has to have an explicit step "Scan". Seeing as our drone will have 4 horizontally placed sensors in a + sign, a 360 degrees scan can be made by making a 45 degrees turn. By keeping track of our rotation and position, with the use of internal sensors, we can deduct where the scanned points are in our selfmade map. | ||

=== Search for frontiers=== | === Search for frontiers=== | ||

In "Search for frontiers", we obviously search for frontiers. As the paper of Frontier-Based Exploration explains, we will use these frontiers to deduct our next goal to travel to. As can be seen in the statediagram, we created substates for "Search for frontiers" (DELETE_FRONTIERS, FIND_FRONTIER_NODES, BUILD_FRONTIERGRID and CLUSTER_FRONTIERS) for reasons explained earlier. | |||

==== DELETE_FRONTIERS==== | |||

In the mapping algorithm, we maintain a list of frontierpoints (2D points to represent position) for the sake of speed. That way, we only have to check the area, obtained during state Scanning, for new frontiers instead of going over the whole map. But because of that, we also need to remove any frontierpoints when they´re not frontiers anymore. DELETE_FRONTIERS is the state where every frontierpoint in the list is checked, to see whether it's still a frontier or not. | |||

==== FIND_FRONTIER_NODES==== | |||

When all the outdated frontiers are deleted, we need to add the newly created frontiers. This happens in FIND_FRONTIER_NODES. Because the area that got updated in Scanning is the only area that got updated, we only have to search for new frontierpoints in there. Those frontierpoints will then be added to the list. | |||

==== BUILD_FRONTIERGRID==== | |||

With the frontierpoint list we build a 2D grid representation of the map and fill it with frontiers on their respected positions (positions without frontiers are null pointers). This grid will be used in the next state to cluster them all together. | |||

=== | ==== CLUSTER_FRONTIERS==== | ||

In this state, the frontiers will be clustered together using a [http://en.wikipedia.org/wiki/Disjoint-set_data_structure Union-Find Algorithm]. With the list of frontierpoints, we can check the surrounding tiles of that position within the frontiergrid to find neighbouring frontiers. These neighbours are then clustered together. When the size of a cluster reaches a certain threshold, it is added to a list of clustered frontiers. The threshold has two advantages. One of them is that the drone will never visit frontiers that are too small to be of any use. The other advantage is to keep the amount of checks for redundant clusters in the list low. You see, when two clusters, that aren't the same, have been added to the list and later on these two clusters have formed 1 cluster, you'll have two of the same cluster in the list and one will have to be removed. With the threshold it will happen less often that two clusters inside the list will be unified. | |||

=== Pick best frontier=== | === Pick best frontier=== | ||

Having obtained several clusters of frontiers, we can now use these to deduct our next goal location. By normalizing the size of the frontiers and traveling distance towards them, we can pick the frontier with the optimum of small traveling distance and large size. However, before we can know the traveling distance towards a frontier we need to know the path towards it. For this we apply pathfinding in the FIND_PATHS state using the [http://www.policyalmanac.org/games/aStarTutorial.htm A* algorithm], we choose this algorithm mainly for it's simplicity. To make travelling along the path easier, we trim the found paths to a set of waypoints (not tile by tile). After all the paths have been found, the best frontier will be picked in the FIND_BEST_FRONTIER state. | |||

=== Travel=== | === Travel=== | ||

Now knowing where the drone should be headed to, we can start following the path we calculated earlier. Since the path exists out of waypoints, the easiest way of traveling is by turning towards the waypoint (TURN_TO_WAYPOINT), followed by moving forward until waypoint is reached (TRAVEL_TO_WAYPOINT). When the waypoint is reached you take the next waypoint and travel to that one, until there are no more waypoints left and you should have arrived at the goal. | |||

== Integration with Paparazzi== | |||

Since we had a hard time to get the AR.Drone2 flying, we ended up with too little time to integrate the mapping algorithm with Paparazzi. We did however work out how we wanted to integrate it. Our ideas will be explained in this section, just keep in mind that most of it is speculation. | |||

For the mapping algorithm to work with Paparazzi, we need to be able to do a few things: | |||

* Keep track of position and heading | |||

* Pilot the AR.Drone2 | |||

* Read out the [[AR_Drone_2/Multidirectional_distance_measurement| distance sensor data]] | |||

* Let Paparazzi call the algorithm in an iterative way | |||

The first and third bullets are necessary to be able to know which maptiles to update. Keeping track of position and heading is also important for being able to calculate the next action to be taken by the drone (it's hard to figure out how to get somewhere if you don't know where you are). The second bullet is needed to be able to fly to where we want, how we want. The last thing we need is to execute the mapping algorithm, since Paparazzi is the main program, it will have to execute the mapping algorithm (and not the other way around). | |||

=== Keep track of position and heading=== | |||

Paparazzi itself keeps track of a position and heading according to gps (this is all located in sw/airborne/state.h). Although the heading is no problem (you can keep the map pointed north that way), it's hard to convert gps coordinates into map coordinates. But there's another solution to this: if we take the starting position as [0, 0] we can then update the position by integrating the velocity every iteration. Although the outcome won't be 100% correct, the error will be kept to a minimum with a high enough update rate. In case velocity isn't updated fast enough we can also try using the acceleration from the accelerometers to update position. | |||

=== Pilot the AR.Drone2=== | |||

Flying with Paparazzi currently works in two different ways: autonomous with a [[Flight_Plans| flight plan]] or on radio control with support of the autopilot until a certain degree. Flight plan based will mean that we'd have to be able to update the flight plan while flying from within the mapping algorithm. This is a hard thing to do since the flight plan is located in the ground station and the mapping algorithm will be working on the AR.Drone2 (the airborne part). Though it would be very nice and would also take away the first problem of keeping track of your own position (the flight plan can cooperate with relative x and y values). | |||

The second option is a lot easier to implement, although more prone to errors in the positioning. When using radio control, Paparazzi sends the radio control values from the ground station to the airborne. There, a struct gets filled with the values, which in turn gets handled by the autopilot. By using the right [[Rotorcraft_Configuration#Autopilot_modes| autopilot mode]] we can fly stable to the locations we want (AP_MODE_HOVER_Z_HOLD for yawing and AP_MODE_ATTITUDE_Z_HOLD for moving). Note however that by using the radio control struct you can only use 3 different autopilot modes (these are described in the [[Rotorcraft_Configuration| airframe]]). | |||

=== Read out the distance sensor data=== | |||

To keep it simple, we wanted to directly access the data from the sensors. It is of course possible to integrate the sensors with Paparazzi as well, but Paparazzi itself has no need for distance measurement. | |||

== | === Let Paparazzi call the algorithm in an iterative way=== | ||

To let Paparazzi use the mapping algorithm, we can implement mapping as a [[Subsystem| subsystem]]. That way mapping can be added optionally to the airframe (just like gps is optional). Since the mapping algorithm is to be called in an iterative way, we can tell the main control loop to call the algorithm and we're done. | |||

[[Category: AR Drone 2]] | [[Category: AR Drone 2]] | ||

Latest revision as of 09:55, 8 July 2016

Mapping has not been integrated with paparazzi due to lack of time. Yet, all components have been tested and are working properly.

One of the functions that the AR.Drone2 should have is to be able to map a certain area (starting off with a room). In order to do this the AR.Drone2 needs to be able to: measure distance to objects, keep track of it's own position and heading, store a map efficiently and avoid crashing into objects. The first two things are needed to create a map from measurements during flight, the third is to use a map and still be able to go through all the control loops of paparazzi, the fourth is to maintain flight. It would also be useful to make an algorithm for the AR.Drone2 to explore the area and store the map in the most efficient way.

Storing the map

For storing the map, at first we believed Octomap (paper) to be a good solution. It's a datastructure using an OcTree to store a 3D map that is updateable, flexible and compact. However, since we want to fly in a 2D manner, it's not very useful. You'd have to retrieve a 2D slice of the map at a certain hight (for which there is no build-in function) every iteration which would take a lot of unnecessary time.

Since we liked the flexibility of the Octomap, we created our own 2D version of it: a quadmap so to speak. Instead of an octree, it uses a quadtree to store information about the map. This way we represent the map as a square instead of a cube, like the Octomap does. The rest is pretty much the same: we keep a record of all the occupied, free and unknown cells and we can update the map by casting rays from a startingpoint towards an endpoint (with endpoint being occupied or not).

Exploring the area

Since the AR.Drone2 will only have point measurement sensors to measure distance to objects, it is pretty much impossible to create a 3D map. Flying on the same hight all the time, will make a 2D map sufficient for the job. We're using Frontier-Based Exploration for this purpose. With this approach you start with a map filled with unknown cells. Then, by scanning the surroundings of the robot, you fill in the unknown cells: occupied or unoccupied. Next, you search all the frontiers (the border between unoccupied and unknown cells) and pick the frontier with the optimum of being the largest and with the least travel distance. Subsequently you take that frontier as your next destination and upon arrival you scan again. There are also variations for 3D (paper). But this is based on a wide range sensor (kinect for example) and therefore not suited for our AR.Drone2.

As explained, Frontier-Based Exploration consists out of a few steps that will be repeated until the whole area is explored:

- Scan

- Search for frontiers

- Pick best frontier

- Travel

We can use these steps to create a statemachine. Since paparazzi needs to go through its control loops to keep the drone in the air (and because it's a single threaded system), we need to execute the mapping algorithm in smaller steps and thus keep track of what state we are.

As you can see, some of the steps of Frontier-Based Exploration have been split up into smaller substates. This way we can see more easily what the program is supposed to do while keeping the operation time per iteration to a minimum. Note however that, while true for some, not all states shift to the next after one iteration. In some cases, FIND_PATHS for example, it will take a few iterations before it will shift.

Init

Inside the mapping algorithm, we not only keep track of the state, we also keep track of other things like indexes, iterator positions etc. In the init state, all these fields are set to their respective starting values, after which the algorithm enters the next state.

Scanning

Some robots can scan entire areas while moving with wide range sensors. Our Ar.Drone2 is limited to point measurement sensors and therefore has to have an explicit step "Scan". Seeing as our drone will have 4 horizontally placed sensors in a + sign, a 360 degrees scan can be made by making a 45 degrees turn. By keeping track of our rotation and position, with the use of internal sensors, we can deduct where the scanned points are in our selfmade map.

Search for frontiers

In "Search for frontiers", we obviously search for frontiers. As the paper of Frontier-Based Exploration explains, we will use these frontiers to deduct our next goal to travel to. As can be seen in the statediagram, we created substates for "Search for frontiers" (DELETE_FRONTIERS, FIND_FRONTIER_NODES, BUILD_FRONTIERGRID and CLUSTER_FRONTIERS) for reasons explained earlier.

DELETE_FRONTIERS

In the mapping algorithm, we maintain a list of frontierpoints (2D points to represent position) for the sake of speed. That way, we only have to check the area, obtained during state Scanning, for new frontiers instead of going over the whole map. But because of that, we also need to remove any frontierpoints when they´re not frontiers anymore. DELETE_FRONTIERS is the state where every frontierpoint in the list is checked, to see whether it's still a frontier or not.

FIND_FRONTIER_NODES

When all the outdated frontiers are deleted, we need to add the newly created frontiers. This happens in FIND_FRONTIER_NODES. Because the area that got updated in Scanning is the only area that got updated, we only have to search for new frontierpoints in there. Those frontierpoints will then be added to the list.

BUILD_FRONTIERGRID

With the frontierpoint list we build a 2D grid representation of the map and fill it with frontiers on their respected positions (positions without frontiers are null pointers). This grid will be used in the next state to cluster them all together.

CLUSTER_FRONTIERS

In this state, the frontiers will be clustered together using a Union-Find Algorithm. With the list of frontierpoints, we can check the surrounding tiles of that position within the frontiergrid to find neighbouring frontiers. These neighbours are then clustered together. When the size of a cluster reaches a certain threshold, it is added to a list of clustered frontiers. The threshold has two advantages. One of them is that the drone will never visit frontiers that are too small to be of any use. The other advantage is to keep the amount of checks for redundant clusters in the list low. You see, when two clusters, that aren't the same, have been added to the list and later on these two clusters have formed 1 cluster, you'll have two of the same cluster in the list and one will have to be removed. With the threshold it will happen less often that two clusters inside the list will be unified.

Pick best frontier

Having obtained several clusters of frontiers, we can now use these to deduct our next goal location. By normalizing the size of the frontiers and traveling distance towards them, we can pick the frontier with the optimum of small traveling distance and large size. However, before we can know the traveling distance towards a frontier we need to know the path towards it. For this we apply pathfinding in the FIND_PATHS state using the A* algorithm, we choose this algorithm mainly for it's simplicity. To make travelling along the path easier, we trim the found paths to a set of waypoints (not tile by tile). After all the paths have been found, the best frontier will be picked in the FIND_BEST_FRONTIER state.

Travel

Now knowing where the drone should be headed to, we can start following the path we calculated earlier. Since the path exists out of waypoints, the easiest way of traveling is by turning towards the waypoint (TURN_TO_WAYPOINT), followed by moving forward until waypoint is reached (TRAVEL_TO_WAYPOINT). When the waypoint is reached you take the next waypoint and travel to that one, until there are no more waypoints left and you should have arrived at the goal.

Integration with Paparazzi

Since we had a hard time to get the AR.Drone2 flying, we ended up with too little time to integrate the mapping algorithm with Paparazzi. We did however work out how we wanted to integrate it. Our ideas will be explained in this section, just keep in mind that most of it is speculation.

For the mapping algorithm to work with Paparazzi, we need to be able to do a few things:

- Keep track of position and heading

- Pilot the AR.Drone2

- Read out the distance sensor data

- Let Paparazzi call the algorithm in an iterative way

The first and third bullets are necessary to be able to know which maptiles to update. Keeping track of position and heading is also important for being able to calculate the next action to be taken by the drone (it's hard to figure out how to get somewhere if you don't know where you are). The second bullet is needed to be able to fly to where we want, how we want. The last thing we need is to execute the mapping algorithm, since Paparazzi is the main program, it will have to execute the mapping algorithm (and not the other way around).

Keep track of position and heading

Paparazzi itself keeps track of a position and heading according to gps (this is all located in sw/airborne/state.h). Although the heading is no problem (you can keep the map pointed north that way), it's hard to convert gps coordinates into map coordinates. But there's another solution to this: if we take the starting position as [0, 0] we can then update the position by integrating the velocity every iteration. Although the outcome won't be 100% correct, the error will be kept to a minimum with a high enough update rate. In case velocity isn't updated fast enough we can also try using the acceleration from the accelerometers to update position.

Pilot the AR.Drone2

Flying with Paparazzi currently works in two different ways: autonomous with a flight plan or on radio control with support of the autopilot until a certain degree. Flight plan based will mean that we'd have to be able to update the flight plan while flying from within the mapping algorithm. This is a hard thing to do since the flight plan is located in the ground station and the mapping algorithm will be working on the AR.Drone2 (the airborne part). Though it would be very nice and would also take away the first problem of keeping track of your own position (the flight plan can cooperate with relative x and y values).

The second option is a lot easier to implement, although more prone to errors in the positioning. When using radio control, Paparazzi sends the radio control values from the ground station to the airborne. There, a struct gets filled with the values, which in turn gets handled by the autopilot. By using the right autopilot mode we can fly stable to the locations we want (AP_MODE_HOVER_Z_HOLD for yawing and AP_MODE_ATTITUDE_Z_HOLD for moving). Note however that by using the radio control struct you can only use 3 different autopilot modes (these are described in the airframe).

Read out the distance sensor data

To keep it simple, we wanted to directly access the data from the sensors. It is of course possible to integrate the sensors with Paparazzi as well, but Paparazzi itself has no need for distance measurement.

Let Paparazzi call the algorithm in an iterative way

To let Paparazzi use the mapping algorithm, we can implement mapping as a subsystem. That way mapping can be added optionally to the airframe (just like gps is optional). Since the mapping algorithm is to be called in an iterative way, we can tell the main control loop to call the algorithm and we're done.